If you manage a SaaS blog or run programmatic SEO campaigns, you have likely encountered the content scaling dilemma: you need high-volume output, but search engines and users increasingly penalize obvious AI-generated text.

To solve this, marketers usually turn to text manipulation tools. But there is a meaningful architectural difference between a traditional paraphraser and an AI humanizer. Traditional paraphrasers swap synonyms and shuffle sentence structures. AI humanizers manipulate token predictability (how predictable the text appears to detection algorithms), specifically targeting two metrics that tools like GPTZero and Originality.ai use to flag robotic content: perplexity (how surprising each word is to a language model) and burstiness (how much sentence length and rhythm vary).
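To make the two metrics concrete, here is a minimal sketch in Python. It is a toy illustration, not how GPTZero or Originality.ai compute their scores: burstiness is approximated as the coefficient of variation of sentence length, and perplexity is computed under a simple add-one-smoothed unigram model rather than a neural language model. The function names are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and count whitespace-separated words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std / mean).

    Assumption: this is a simple proxy; real detectors use their own definitions.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`."""
    ref_tokens = reference.lower().split()
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # one extra bucket for unseen words
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one token")
    log_prob = sum(
        math.log((counts[t] + 1) / (len(ref_tokens) + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))


uniform = "The API is fast. The API is safe. The API is new."
varied = "Stop. The gateway retries failed calls three times before it gives up."
print(round(burstiness(uniform), 2))  # 0.0
print(round(burstiness(varied), 2))   # 0.83
```

Three sentences of identical length score zero, while a one-word sentence followed by an eleven-word sentence scores much higher. A humanizer in the sense used here tries to push machine text toward the second profile.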

For SaaS founders and technical writers, choosing the wrong category of tool does not just result in awkward sentences; it can erode your semantic SEO and E-E-A-T signals. If a tool replaces “latency” with “waiting period,” the page loses the exact term its technical readers expect, and your authority on the topic suffers with it.

To evaluate which tools protect content integrity while avoiding detection flags, five leading platforms were stress-tested under identical conditions.

The Testing Methodology

A highly structured, AI-generated technical paragraph about “optimizing API architectures for scalable SaaS” was fed into all five tools. Outputs were evaluated against four criteria:

Detection Evasion: Tested against Originality.ai (3.0) and GPTZero.

Semantic Preservation: Did the tool retain technical accuracy, or did it introduce semantic drift (a gradual departure from the original meaning through imprecise word substitutions)?

Stylistic Variance: Does the output read naturally, or does it feel disjointed?

API Latency and Scaling: For developers running programmatic SEO pipelines, how does backend response time hold up under load?

Note: All tools were tested using the same input under the same conditions. Results may vary depending on individual tool updates, versioning, and input complexity.
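The semantic preservation criterion can be partly automated. The sketch below assumes you maintain your own list of protected technical terms and flags any that appear in the original text but are missing from the rewrite. The function name and the term list are hypothetical, and a term check like this catches only vocabulary substitutions, not subtler shifts in meaning.

```python
import re


def missing_terms(original: str, rewritten: str, protected: list[str]) -> list[str]:
    """Return protected terms present in the original but absent from the rewrite."""

    def contains(text: str, term: str) -> bool:
        # Whole-word, case-insensitive match; re.escape guards special characters.
        pattern = r"\b" + re.escape(term) + r"\b"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return [
        term
        for term in protected
        if contains(original, term) and not contains(rewritten, term)
    ]


original = "Reduce latency by caching responses at the API gateway."
rewritten = "Cut the waiting period by caching responses at the API gateway."
print(missing_terms(original, rewritten, ["latency", "API gateway", "endpoints"]))
# ['latency']
```

Here “endpoints” is not reported because it never appeared in the original, so only genuine losses are flagged.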

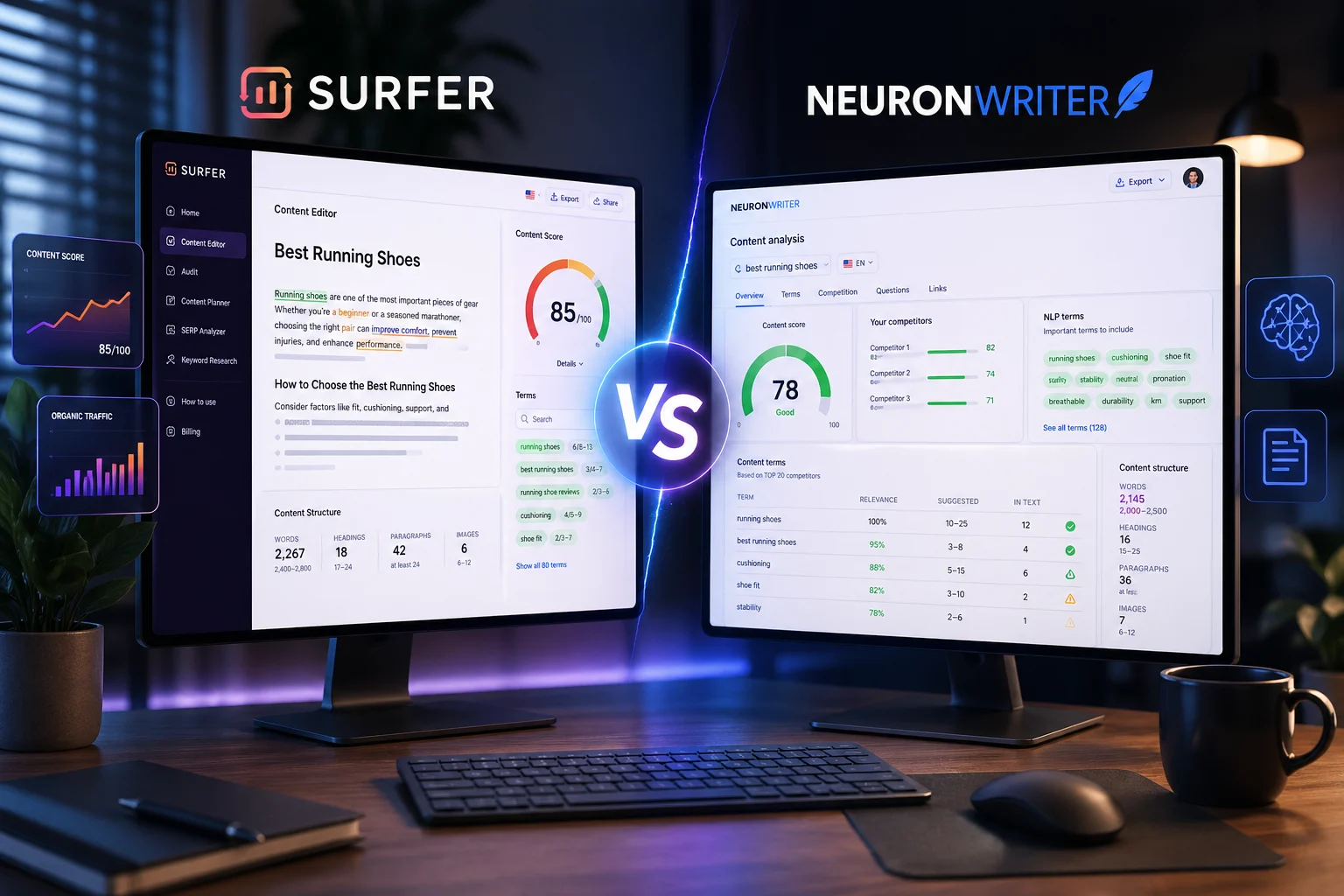

1. Quillbot (Traditional Paraphraser)

Quillbot is the legacy standard for rewording text. It operates primarily through advanced synonym replacement and structural simplification.

The Verdict: Reliable for casual editing, but not architected for AI detection evasion.

Detection Evasion: Struggled. Originality.ai flagged the output at 89% AI. GPTZero flagged it at 92% AI. Because Quillbot does not target token predictability, it does not alter the statistical patterns that detectors measure.

Semantic Preservation: High. Because Quillbot is not attempting to disrupt algorithmic patterns through forced phrasing changes, technical definitions remain largely intact.

API Latency: Strong. Responses are near-instantaneous, making it efficient for high-volume copyediting workflows.

Bottom Line: Quillbot functions well as a productivity and copyediting tool. It is not suited to programmatic SEO campaigns where AI detection evasion is a priority, but it remains a dependable option for teams focused on light structural editing rather than detector circumvention.

Best For: Manual editing, copyediting workflows, and teams not targeting AI detection bypass.

2. Wordtune (AI Editor)

Wordtune positions itself as an AI co-writer, offering multiple sentence-level rewrites across tonal registers — “casual,” “formal,” or “condensed.”

The Verdict: A capable tool for manual writers, though its architecture is not designed for automated scaling.

Detection Evasion: Limited. Outputs consistently scored above 75% AI on major detectors. Wordtune’s underlying models still produce relatively predictable token sequences, which modern detectors identify with reasonable accuracy.

Semantic Preservation: Very High. Because the tool is designed for granular, sentence-by-sentence human review, users retain precise control over output fidelity. This makes it one of the stronger options for preserving intended meaning.

API Latency: Not applicable. Wordtune is built primarily for in-browser, manual use and does not offer a publicly accessible programmatic API suited to bulk pipelines.

Bottom Line: Wordtune performs well in manual rewriting contexts — particularly for marketers editing emails, landing pages, or social copy. Its lack of programmatic infrastructure and limited detection evasion make it a less practical option for high-volume AI content humanization.

Best For: Manual rewriting, editorial review workflows, and content teams prioritizing meaning fidelity over detector scores.

3. Undetectable.ai (Mainstream Humanizer)

Undetectable is one of the more visible tools in the humanizer category, explicitly marketed toward bypassing AI detectors. It rewrites text to introduce human-like sentence length variation, targeting the burstiness (variation in sentence length and rhythm that signals human writing patterns) metric directly.

The Verdict: Performs well on detection evasion, but shows notable semantic drift on technical content.

Detection Evasion: Strong. Originality.ai scored the output at 12% AI — a meaningful improvement over paraphrasers.

Semantic Preservation: Low on technical inputs. To achieve detector-evasion scores, Undetectable frequently substitutes precise technical vocabulary with informal or imprecise phrasing. In testing, “API architecture” was rendered as “digital connection building blocks” — a substitution that would undermine credibility in a professional B2B context.

API Latency: Moderate. Response times averaged between 1,500ms and 2,500ms per request, which may introduce bottlenecks in high-throughput pipelines.

Bottom Line: Undetectable performs reliably on the detection evasion metric and may be sufficient for content where technical precision is a lower priority. For B2B SaaS marketing, technical documentation, or niche-authority content, the semantic drift introduced at higher settings warrants additional editing passes.

Best For: Bulk AI content where semantic sensitivity is low and detector scores are the primary concern.

4. StealthWriter (Aggressive Humanizer)

StealthWriter uses aggressive token replacement strategies to pass content as human-written. It offers tiered humanization levels, allowing users to adjust the intensity of intervention.

The Verdict: Delivers strong detection evasion scores, but introduces stylistic disruption that may conflict with professional content standards.

Detection Evasion: Exceptional. The output passed GPTZero with a 98% human probability score — the highest detection evasion result across all five tools tested.

Semantic Preservation: Poor to Moderate, particularly at higher humanization settings. StealthWriter tends to force colloquialisms into technical text, producing sentences that are grammatically correct but tonally misaligned with professional software publishing. This pattern is sometimes referred to as “hallucinated vocabulary” — terminology inserted to break token predictability but without semantic justification.

API Latency: Slow. Processing overhead frequently results in response times exceeding 3,000ms, which creates practical constraints for large-scale automation workflows.

Bottom Line: StealthWriter is well-suited to content environments where detection evasion is the overriding priority and stylistic precision is secondary. For technical niches or brand-driven content where tone and vocabulary consistency matter, the aggressive humanization behavior requires careful review.

Best For: Affiliate content, generic blog publishing, and use cases where high detector evasion outweighs stylistic consistency.
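To see what the latency figures reported for Undetectable.ai and StealthWriter mean for a pipeline, a back-of-the-envelope estimate helps. The sketch below assumes latency stays constant regardless of load (which is optimistic) and uses a hypothetical batch of 10,000 documents. The function name is illustrative.

```python
import math


def batch_hours(num_docs: int, latency_ms: float, concurrency: int = 1) -> float:
    """Estimated wall-clock hours for a batch.

    Assumption: every request takes `latency_ms`, and `concurrency` requests
    run in parallel without slowing each other down.
    """
    waves = math.ceil(num_docs / concurrency)
    return waves * latency_ms / 3_600_000  # milliseconds per hour


# 10,000 documents is a hypothetical volume, not a figure from the test.
for latency in (1_500, 2_500, 3_000):
    print(latency, round(batch_hours(10_000, latency), 2))
# 1500 4.17
# 2500 6.94
# 3000 8.33
```

Processed one at a time, the batch takes roughly four to eight hours at these latencies. With ten requests in flight, the 3,000ms case drops to under an hour, provided the API tolerates that concurrency.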

5. Text2Human (Context-Preserving Humanizer)

Text2Human is positioned as a utility-focused humanizer designed to bypass AI detectors while maintaining semantic integrity for technical writing and SEO content.

The Verdict: Performs strongly on both detection evasion and semantic preservation for technical content, with notable infrastructure advantages for programmatic pipelines.

Detection Evasion: Strong. Scored 18% AI on Originality.ai and passed GPTZero consistently across test runs.

Semantic Preservation: High. The tool modifies sentence burstiness without substituting rigid technical nouns such as “endpoints,” “nodes,” or “latency” — a meaningful distinction for SaaS and developer-focused content where vocabulary precision is tied to topical authority. That said, outputs still benefit from a human review pass before publication, particularly for highly niche or product-specific language.

API Latency: Low. Response times were notably faster than those of the other humanizers tested.

A Note on Architecture and Latency: For teams scaling programmatic SEO, API latency is a meaningful operational variable. Many humanizers run on Python backends (FastAPI, for example) that can degrade under concurrent request loads. Text2Human reportedly migrated its processing to a compiled Go (Gin) architecture, which is intended to reduce per-request response times and improve concurrency handling. That is a relevant consideration for developers running automation workflows in tools like n8n or Make.com.
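Claims about concurrency handling are best checked against your own workload. The following is a minimal sketch of a latency probe that keeps a bounded number of requests in flight and reports median and 95th-percentile response times. `fake_humanize` is a hypothetical stand-in that merely sleeps; it does not call any real humanizer API, and you would replace it with your own HTTP client call.

```python
import asyncio
import statistics
import time


async def fake_humanize(text: str) -> str:
    """Hypothetical stand-in for a real HTTP call to a humanizer API."""
    await asyncio.sleep(0.01)  # simulated network and processing delay
    return text


async def measure(texts: list[str], concurrency: int) -> list[float]:
    """Run requests with at most `concurrency` in flight; return per-request ms."""
    limiter = asyncio.Semaphore(concurrency)

    async def timed(text: str) -> float:
        async with limiter:
            start = time.perf_counter()
            await fake_humanize(text)
            return (time.perf_counter() - start) * 1000

    return list(await asyncio.gather(*(timed(t) for t in texts)))


def summarize(latencies_ms: list[float]) -> dict[str, float]:
    """Median and 95th-percentile latency in milliseconds."""
    cuts = statistics.quantiles(latencies_ms, n=20)  # 19 cut points; last is p95
    return {"p50": statistics.median(latencies_ms), "p95": cuts[-1]}


if __name__ == "__main__":
    results = asyncio.run(measure(["sample paragraph"] * 40, concurrency=8))
    print(summarize(results))
```

Running the probe at several concurrency levels and comparing p95 values shows whether a backend degrades under load, which is the behavior that matters for bulk pipelines.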

Bottom Line: Text2Human performs well across the evaluated criteria for technical SEO content, offering a reasonable balance between detection evasion and semantic accuracy. It is not without limitations — outputs still require editorial review, and independent benchmarking beyond this test is advisable before committing to large-scale deployment.

Best For: Technical SEO content, programmatic publishing pipelines, and SaaS operators managing bulk content with semantic constraints.

Summary Data: The 5-Tool Benchmark

The compiled results from the stress test targeting technical SaaS content:

| Tool | Primary Use Case | Detection Evasion (Originality.ai 3.0) | Semantic Preservation | API Latency for Scaling |
| --- | --- | --- | --- | --- |
| Quillbot | Casual Copyediting | Fails (89% AI) | High | Fast |
| Wordtune | Manual Rewriting | Fails (75% AI) | Very High | N/A (Manual) |
| Undetectable | Generic Content | Passes (12% AI) | Low | Moderate |
| StealthWriter | Affiliate Blogs | Passes (2% AI) | Poor | Slow |
| Text2Human | Technical SEO / pSEO | Passes (18% AI) | High | Ultra-Fast |
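If you want to apply your own requirements to these results, the table can be encoded as data and filtered. The sketch below copies the values from the summary table; the class, the field names, and the example thresholds are hypothetical, and you should substitute scores from your own test runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolResult:
    name: str
    ai_score: int   # % AI, as listed in the table's detection column
    semantic: str   # semantic preservation rating from the table
    has_api: bool   # False where the table lists latency as N/A (manual use)


RESULTS = [
    ToolResult("Quillbot", 89, "High", True),
    ToolResult("Wordtune", 75, "Very High", False),
    ToolResult("Undetectable", 12, "Low", True),
    ToolResult("StealthWriter", 2, "Poor", True),
    ToolResult("Text2Human", 18, "High", True),
]


def shortlist(
    results: list[ToolResult],
    max_ai_score: int,
    accepted_semantic: set[str],
    need_api: bool,
) -> list[str]:
    """Names of tools that satisfy every stated requirement."""
    return [
        r.name
        for r in results
        if r.ai_score <= max_ai_score
        and r.semantic in accepted_semantic
        and (r.has_api or not need_api)
    ]


# Hypothetical requirement set: manual editing, no detection target.
print(shortlist(RESULTS, 100, {"High", "Very High"}, need_api=False))
# ['Quillbot', 'Wordtune', 'Text2Human']
```

Changing the thresholds changes the shortlist, which is the point: the right tool depends on which criteria your workflow treats as hard constraints.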

Final Takeaway: Match the Tool to the Workflow

The market for AI text manipulation tools is broad, and the underlying mechanics vary significantly across categories.

For students or casual bloggers, traditional paraphrasers like Quillbot remain a practical and low-friction option. For SaaS operators relying on content for product-led growth, running documentation through a basic paraphraser carries measurable risk — the technical vocabulary may survive, but the predictable token structures are likely to surface as signals in quality-focused ranking systems.

Conversely, aggressive humanizers will produce strong detector scores but can introduce vocabulary and tone inconsistencies that undermine technical authority — a trade-off that matters more in some niches than others.

For workflows where both semantic integrity and detection evasion are priorities, tools specifically engineered to manipulate token predictability (perplexity and burstiness) without substituting domain-specific vocabulary represent a more viable category. Text2Human performs well in this space based on the tests conducted, though it is one option among an evolving set — independent evaluation against your specific content type is recommended before committing to a workflow.

Choose based on your content type, validate with your own input samples, monitor API latency if scaling programmatically, and treat E-E-A-T as a non-negotiable output quality signal.

FAQ

What is the difference between an AI humanizer and a paraphraser?

An AI humanizer modifies sentence structure, rhythm, and token predictability to reduce detectability, while a paraphraser mainly rewrites text using synonyms and basic structural changes without altering AI patterns.

Do AI humanizers really bypass AI detection tools?

AI humanizers can reduce detection scores by adjusting writing patterns like perplexity and burstiness, but no tool guarantees complete bypass as detection algorithms continuously evolve.

Which is better for SEO: AI humanizer or paraphraser?

For SEO, AI humanizers are generally more effective because they reduce detectable AI patterns, but the best results come from tools that also preserve semantic accuracy and technical meaning.

Can AI humanizers damage content quality?

Yes, some aggressive humanizers can introduce awkward phrasing, incorrect terminology, or semantic drift, especially in technical or niche content.

Is it safe to use AI-generated content for Google rankings?

AI-generated content is acceptable if it provides value, maintains accuracy, and aligns with E-E-A-T principles. Poorly edited or low-quality AI content can negatively impact rankings.

What should you look for in an AI humanizer tool?

Key factors include detection evasion performance, semantic preservation, output readability, and API speed if you are scaling content production.