BOTTOM LINE UP FRONT: DEEPSEEK OR CLAUDE?

If you need a straight answer before reading anything else — here it is.

DeepSeek is the smarter pick if you are a developer, technical founder, or API-heavy team that wants strong coding performance without paying premium prices. It punches above its weight class on benchmarks and costs a fraction of what proprietary models charge.

Claude is the better choice if writing quality, reliability, document analysis, or enterprise-grade safety are non-negotiable for your workflow. It is the more polished, predictable, and business-ready tool.

Use DeepSeek if: you are building software, running cost-sensitive pipelines, or want open-source flexibility. Use Claude if: you are producing content, handling client deliverables, or need a consistent, safety-conscious AI you can trust in production.

INTRODUCTION: WHY THIS COMPARISON MATTERS RIGHT NOW

The AI landscape shifted fast after DeepSeek released its R1 reasoning model in January 2025. DeepSeek went from a relatively unknown Chinese research lab to one of the most-discussed names in AI, not because of hype but because of performance. Its models matched, and in some cases beat, GPT-4-class models on key benchmarks, at a cost that made enterprise buyers and indie developers take notice.

Claude, built by Anthropic, has been the go-to for teams that care about writing quality, long-context handling, and AI safety. It has steadily grown its user base among agencies, content teams, and enterprise buyers who want reliability over raw capability.

This guide is written for founders, developers, marketers, agencies, and SMB decision-makers who are trying to figure out which tool deserves their time and budget. It is not a benchmark summary or a feature list dump. It is a practical decision framework based on real-world usage.

By the end of this article, you will know exactly which tool fits your workflow — and why.

QUICK COMPARISON TABLE: DEEPSEEK VS CLAUDE AT A GLANCE

Best For

- DeepSeek: Developers, cost-focused teams, technical workflows

- Claude: Writers, enterprise users, document-heavy tasks

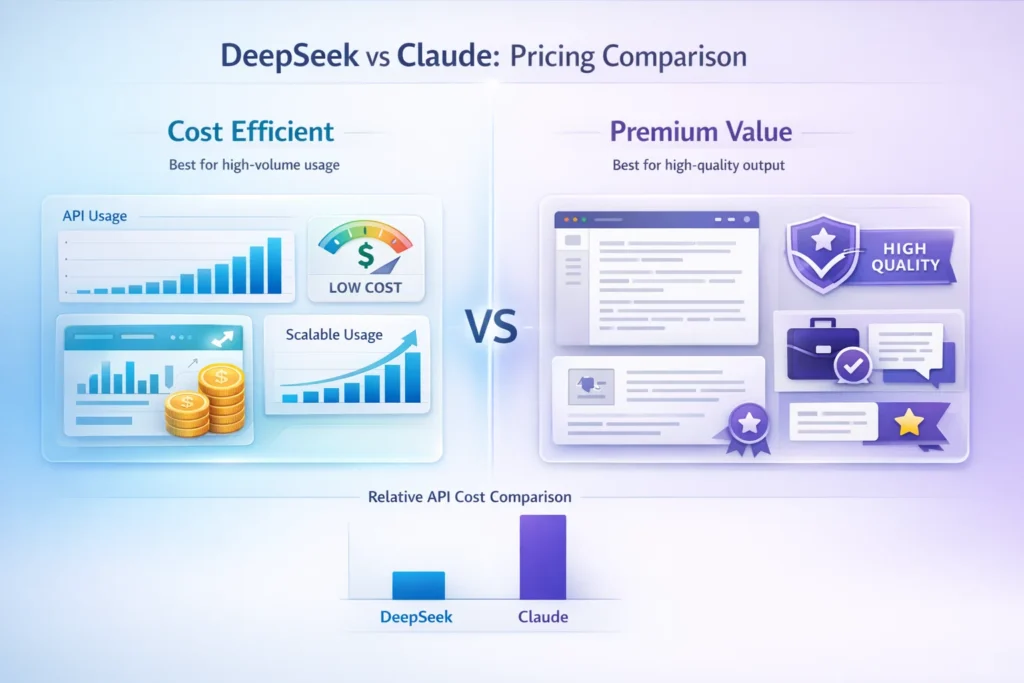

Pricing

- DeepSeek: Very low API cost, open-source options available

- Claude: Free tier plus Pro at $20/month, higher API rates

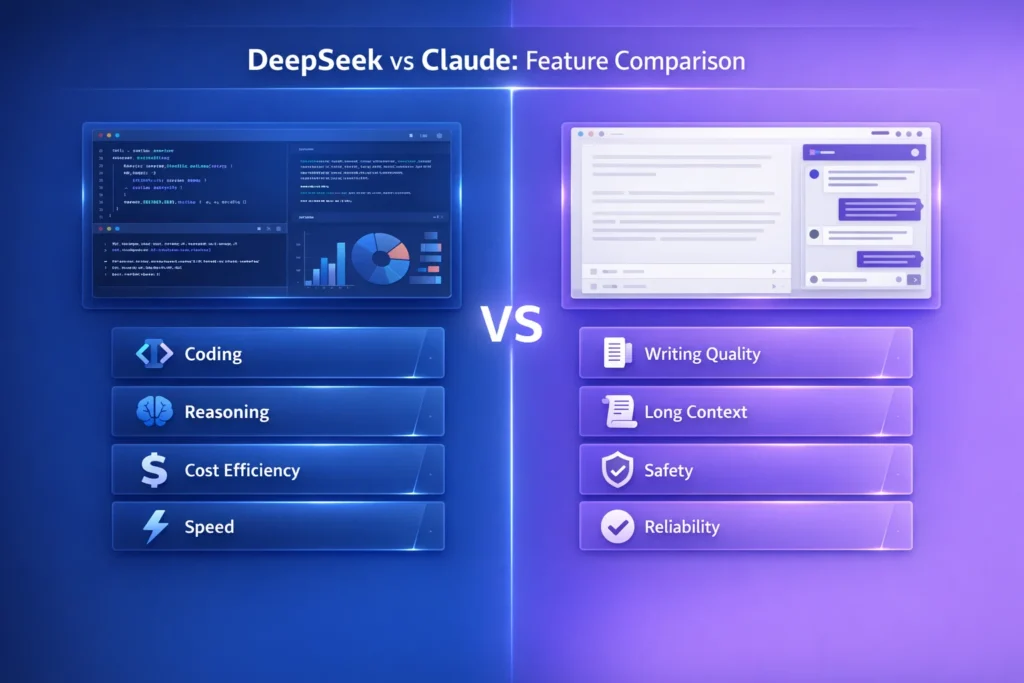

Core Strength

- DeepSeek: Coding, cost efficiency, reasoning at scale

- Claude: Writing quality, long context window, safety

Key Limitation

- DeepSeek: Data privacy concerns, weaker creative writing

- Claude: Higher API cost, occasional over-cautious refusals

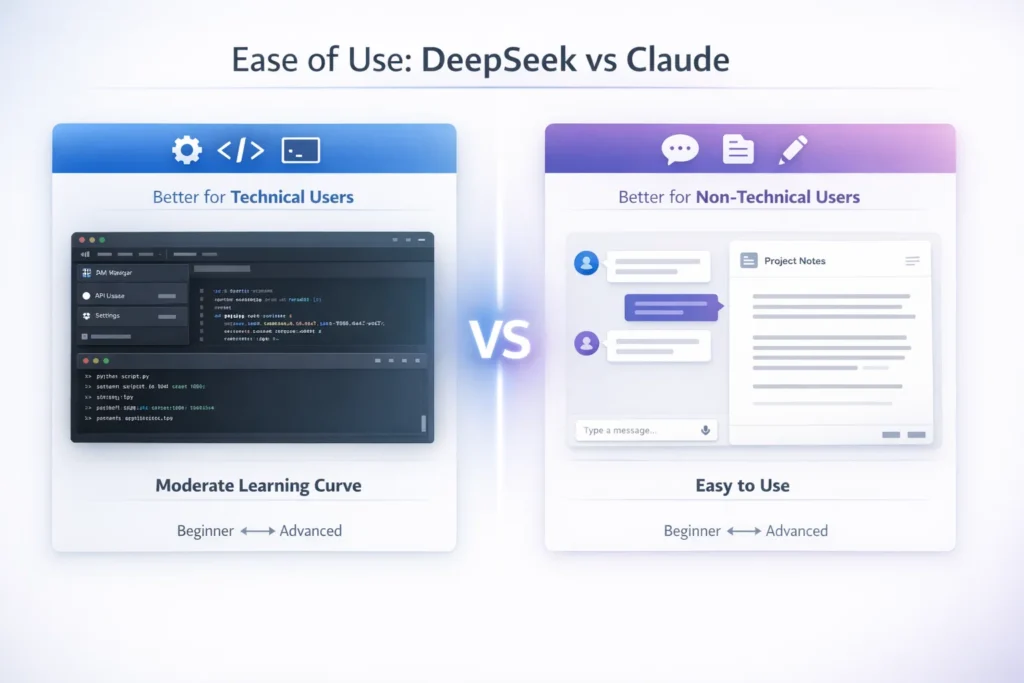

Ease of Use

- DeepSeek: Moderate, better suited for technical users

- Claude: High, accessible for non-technical teams

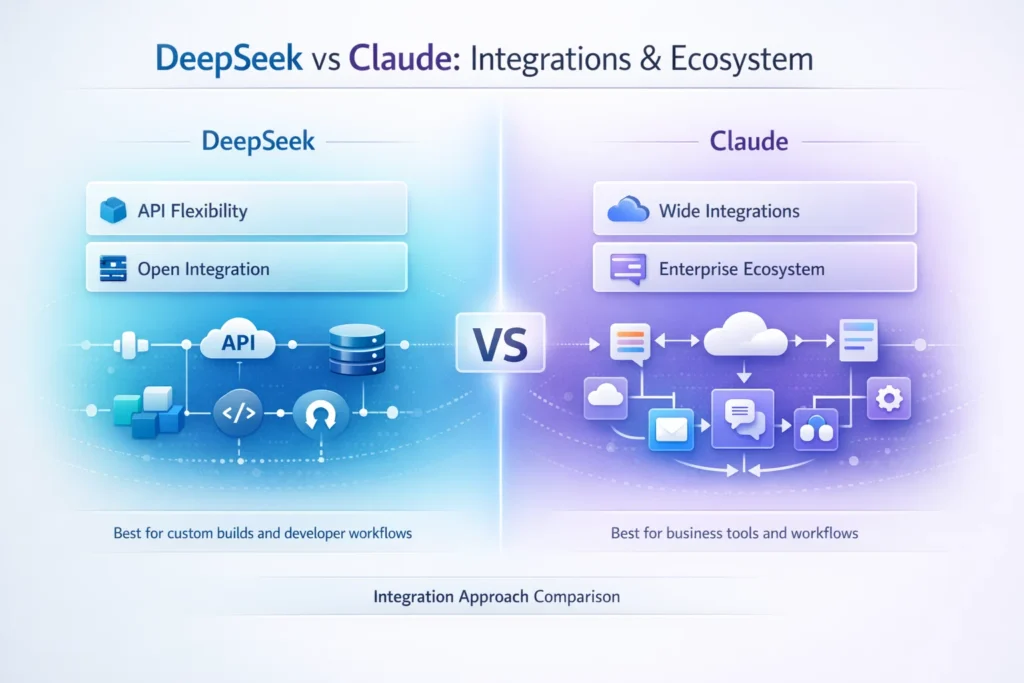

API and Integrations

- DeepSeek: Strong, very affordable per token

- Claude: Strong, widely supported across tools and platforms

WHAT IS DEEPSEEK?

DeepSeek is a family of large language models developed by DeepSeek AI, a Chinese research company. It positions itself as a high-performance, low-cost alternative to leading proprietary models like GPT-4 and Claude 3. Several of its models, including V2, V3, and R1, are open-source, which lets developers self-host or fine-tune them without paying licensing fees.

What sets DeepSeek apart is its price-to-performance ratio. On coding benchmarks like HumanEval and on mathematical reasoning tasks, DeepSeek delivers results that rival models costing ten to twenty times more per API call. For teams that run high-volume AI pipelines, this is a significant financial consideration.

Core Capabilities

DeepSeek performs particularly well in structured, logical tasks. Code generation, debugging, technical documentation, and multi-step reasoning are where the model consistently delivers. It also handles STEM-heavy content, data analysis prompts, and algorithmic problem-solving better than most models in its cost tier.

Primary Use Cases

- Software development and automated code generation

- Technical documentation and developer tooling

- Cost-sensitive production deployments with high query volume

- Research assistance in mathematics, science, and engineering

Strengths

- Open-source availability gives teams flexibility and control

- API pricing is among the lowest for a frontier-class model

- Competitive coding and reasoning performance

- Viable option for self-hosted enterprise deployments

Limitations

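- Data sent to DeepSeek’s hosted app and API is processed on infrastructure based in China, which raises compliance concerns for US businesses handling sensitive information (self-hosting the open-source models keeps data on your own infrastructure)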

- Creative and long-form writing quality is noticeably weaker than Claude

- The consumer-facing interface is less polished and user-friendly

- Smaller community and ecosystem compared to Anthropic or OpenAI

WHAT IS CLAUDE?

Claude is a proprietary large language model built by Anthropic, a US-based AI safety company. Anthropic was founded by former OpenAI researchers with a focus on building reliable, interpretable, and safe AI systems. Claude reflects that mission at the product level — it is designed to be helpful, harmless, and honest in a way that makes it suitable for business-critical and customer-facing applications.

The Claude 3 family (Haiku, Sonnet, Opus) and the newer Claude 3.5 updates offer a range of performance tiers, all with a 200,000-token context window. This makes Claude one of the most capable options for document analysis, legal review, long-form research, and any task that requires reading and reasoning over large volumes of text.

Core Capabilities

Claude’s standout capability is writing. Tone consistency, instruction-following, nuanced editing, and long-form generation are areas where Claude leads the field. It is particularly strong on tasks where output quality and brand voice matter — content marketing, communications, editorial workflows, and summarization.

Primary Use Cases

- Content creation, copywriting, and editorial editing

- Document analysis, contract review, and research synthesis

- Customer-facing applications requiring consistent, on-brand output

- Enterprise workflows where safety guardrails reduce risk

Strengths

- Best-in-class writing quality among current LLMs

- 200K-token context window fits book-length documents, long contracts, or small-to-medium codebases in a single prompt

- Safety-focused training, including Anthropic’s Constitutional AI approach, aims to reduce harmful output and hallucination risk

- Reliable and consistent across repeated, high-stakes prompts

- US-based company and infrastructure, important for compliance-conscious teams

Limitations

- API pricing is meaningfully higher than DeepSeek at scale

- Can be overly cautious on edge-case prompts, sometimes refusing legitimate requests

- No open-source version — fully proprietary and Anthropic-controlled

- Larger tiers can feel slower on simple or lightweight tasks than speed-optimized models

FEATURE-BY-FEATURE COMPARISON: DEEPSEEK VS CLAUDE

AI Model Capability: Reasoning, Coding, and Creativity

On pure coding benchmarks, DeepSeek holds its own against Claude and in several tests outperforms it, particularly on algorithmic challenges and competitive programming tasks. Claude is not a weak coder, but it is not where its edge lies.

For creative reasoning — generating narratives, handling ambiguous briefs, producing multi-layered arguments — Claude is significantly stronger. Its outputs feel more considered, more nuanced, and more ready to use without heavy editing.

Output Quality and Control

Claude produces cleaner, more polished output by default. If you give both models the same writing prompt, Claude’s response typically requires less post-editing. DeepSeek’s outputs in non-technical tasks can feel more robotic or inconsistently structured.

For technical outputs like code, API responses, and structured data, both models perform well. DeepSeek often produces cleaner, more efficient code snippets, while Claude’s code is better-commented and explained.

Context Length and Memory

Claude’s 200K-token context window is one of its defining features. You can feed it an entire research paper, a 300-page contract, or a small-to-medium codebase and ask it to reason across the whole thing. DeepSeek’s context window is competitive but smaller, which matters for the most demanding long-context tasks.

If your workflow regularly involves large documents, Claude has a structural advantage here.
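A quick way to sanity-check whether a document will fit is to estimate its token count before sending it. The sketch below assumes roughly four characters per token for English text. That is a common rule of thumb, not any provider’s actual tokenizer. It also reserves a hypothetical output budget. `estimate_tokens` and `fits_in_context` are illustrative names, not part of any SDK.

```python
# Rough pre-flight check: will a document fit in a model's context window?
# Assumption: ~4 characters per token for English prose. Real tokenizers
# differ, so treat the result as an estimate, not a guarantee.

CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, context_window: int, reserve_for_output: int = 4_000) -> bool:
    """True if the estimated prompt plus a reserved output budget fits the window."""
    return estimate_tokens(text) + reserve_for_output <= context_window


if __name__ == "__main__":
    # Hypothetical 300-page contract at ~2,000 characters per page.
    contract = "x" * (300 * 2_000)
    print(estimate_tokens(contract))           # 150000
    print(fits_in_context(contract, 200_000))  # True
```

Denser pages push the estimate up quickly. For documents near the limit, confirm with the provider’s own token-counting tools before you rely on a single-pass prompt.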

Prompt Handling and Accuracy

Claude handles vague, underspecified, or multi-step prompts more gracefully. It asks clarifying questions when needed and interprets intent well. DeepSeek benefits more from precise, structured prompting — it performs better when you give it clear instructions and defined output formats.

For non-technical users who write prompts conversationally, Claude is more forgiving.

Speed and Performance

DeepSeek has a speed advantage on shorter, simpler tasks, particularly via API. Claude can be slightly slower at the Opus tier on complex generations. For most practical use cases, neither difference is significant enough to drive a decision — but at high API volume, DeepSeek’s speed-and-cost combination is compelling.

EASE OF USE AND LEARNING CURVE

UI and Interface

Claude.ai is a refined, well-designed consumer product. The interface is clean, the conversation flow is intuitive, and features like document upload, project workspaces, and memory make it easy for non-technical users to get value quickly.

DeepSeek’s web interface is functional but less polished. It works, but it does not have the same level of product design investment that Anthropic has put into the Claude experience. For technical users who primarily interact through the API, this matters less.

Prompt Usability

Claude is more forgiving with natural language prompts. You can write conversationally and get high-quality results. DeepSeek rewards more structured, precise prompting — which is fine for developers but creates a steeper curve for general business users.

Beginner vs Advanced Suitability

Claude is the better starting point for teams without dedicated AI expertise. Its interface, output quality, and instruction-following make it approachable for marketers, operations teams, and executives who want to use AI without becoming prompt engineers.

DeepSeek is better suited for technical teams who are comfortable with API calls, system prompts, and prompt optimization. It offers more control at the cost of a higher learning curve.

PRICING AND VALUE ANALYSIS: DEEPSEEK VS CLAUDE

Free Plan Availability

Both tools offer free tiers. Claude’s free plan provides access to Claude 3.5 Sonnet with daily usage limits. It is generous enough for light personal use but not sufficient for business workflows.

DeepSeek also offers a free tier through its web interface, and the open-source R1 model is available for self-hosting at no licensing cost.

API Pricing Comparison

This is where DeepSeek’s advantage becomes concrete.

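DeepSeek API pricing sits at approximately $0.14 per million input tokens and $0.28 per million output tokens for its V3 model as of early 2026. Claude’s API pricing for Sonnet is approximately $3.00 per million input tokens and $15.00 per million output tokens. Both providers adjust rates and offer discounts such as cached-input pricing, so confirm current figures on each pricing page before budgeting.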

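The difference is not marginal. At these rates, DeepSeek’s input tokens are about 21 times cheaper than Sonnet’s, and its output tokens are about 54 times cheaper. For high-volume use cases, DeepSeek can cost roughly one-tenth to one-fiftieth as much as Claude, depending on the Claude tier and the mix of input and output tokens.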
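To see how the per-token gap compounds, here is a back-of-envelope calculator that uses the rates quoted above. The workload is a hypothetical example: 300,000 calls a month at 1,000 input and 500 output tokens each. Update the price table from each provider’s current pricing page before using the numbers.

```python
# Monthly API cost estimate from per-million-token prices (USD).
# Prices are the approximate figures quoted in this article; update them
# before making real budgeting decisions.
PRICES_PER_MILLION = {
    "DeepSeek V3": {"input": 0.14, "output": 0.28},
    "Claude Sonnet": {"input": 3.00, "output": 15.00},
}


def monthly_cost(model: str, calls: int, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for `calls` requests with the given average tokens per call."""
    price = PRICES_PER_MILLION[model]
    total_input = calls * input_tokens
    total_output = calls * output_tokens
    return (total_input * price["input"] + total_output * price["output"]) / 1_000_000


if __name__ == "__main__":
    workload = dict(calls=300_000, input_tokens=1_000, output_tokens=500)
    deepseek = monthly_cost("DeepSeek V3", **workload)
    claude = monthly_cost("Claude Sonnet", **workload)
    print(f"DeepSeek V3:   ${deepseek:,.2f} / month")    # $84.00
    print(f"Claude Sonnet: ${claude:,.2f} / month")      # $3,150.00
    print(f"Claude costs {claude / deepseek:.1f}x more")  # 37.5x
```

Output tokens carry the larger price gap. Output-heavy workloads therefore land near the top of that range, and input-heavy ones sit closer to the 21x input ratio.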

Cost Efficiency: Where DeepSeek Has a Clear Edge

If you are running an application that makes hundreds of thousands of API calls per month — a customer support bot, a code review tool, an automated content pipeline — DeepSeek’s economics are hard to ignore. The performance is strong enough that the trade-off is often worth it for technical tasks.

Claude’s premium pricing is easier to justify when output quality directly affects business results. If a poorly written email costs a client relationship, saving on API calls is a false economy.

Best Value by User Type

- Bootstrapped founders running technical products: DeepSeek

- Content teams and agencies where quality is billable: Claude

- High-volume developer tools and automation pipelines: DeepSeek API

- Enterprise teams with compliance requirements: Claude

- Hybrid teams who want both: DeepSeek for back-end processing, Claude for front-facing output
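The hybrid pattern for teams who want both can be as simple as a routing function in front of your API calls. This is a sketch under stated assumptions. The model labels are placeholders. The rule that sensitive data always goes to Claude reflects this article’s compliance guidance, not any provider requirement.

```python
from enum import Enum


class Audience(Enum):
    INTERNAL = "internal"            # back-end processing no client will read
    CLIENT_FACING = "client_facing"  # output that reaches customers or clients


# Placeholder labels; substitute the model IDs your accounts actually use.
BACKEND_MODEL = "deepseek"
FRONTEND_MODEL = "claude"


def pick_model(audience: Audience, contains_sensitive_data: bool = False) -> str:
    """Route internal work to the cheaper model and client-facing work to Claude."""
    if contains_sensitive_data:
        # Keep regulated or confidential data off DeepSeek's hosted API.
        return FRONTEND_MODEL
    if audience is Audience.INTERNAL:
        return BACKEND_MODEL
    return FRONTEND_MODEL


if __name__ == "__main__":
    print(pick_model(Audience.INTERNAL))       # deepseek
    print(pick_model(Audience.CLIENT_FACING))  # claude
```

A team that self-hosts DeepSeek’s open-source models could relax the sensitive-data rule, because data would then stay on its own infrastructure.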

PERFORMANCE AND RELIABILITY

Output Consistency

Claude is more consistent across repeated runs of the same prompt. If you run the same query ten times, the outputs are recognizably similar in quality and tone. This consistency is valuable for production applications where unpredictable output creates downstream problems.

DeepSeek can vary more between runs, particularly on open-ended prompts. For structured tasks with clear outputs, this is less of an issue.
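One way to check consistency on your own prompts is to run the same query several times and compare the outputs. The sketch below uses Python’s standard `difflib` to compute mean pairwise text similarity. It measures surface-level wording overlap, not quality. Collecting the outputs (the API calls themselves) is left out.

```python
from difflib import SequenceMatcher
from itertools import combinations
from statistics import mean


def consistency_score(outputs: list[str]) -> float:
    """Mean pairwise similarity (0.0 to 1.0) across repeated outputs for one prompt.

    1.0 means every run produced identical text; lower values mean more drift.
    """
    if len(outputs) < 2:
        return 1.0
    return mean(SequenceMatcher(None, a, b).ratio() for a, b in combinations(outputs, 2))


if __name__ == "__main__":
    # Hypothetical outputs from three runs of the same support-bot prompt.
    runs = [
        "Refunds are processed within 5 business days.",
        "Refunds are processed within 5 business days.",
        "We process refunds in about a week.",
    ]
    print(round(consistency_score(runs), 2))
```

Score each model on the same prompt set. For structured tasks, it is often more useful to compare parsed fields than raw text.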

Hallucination Tendencies

Both models hallucinate. Claude’s safety- and honesty-focused training, including Anthropic’s Constitutional AI approach, aims to reduce confident false statements, and it tends to do better on factual and reasoning tasks. DeepSeek, while strong on benchmarks, can produce plausible-sounding but inaccurate outputs on topics outside its strongest areas.

For any application where factual accuracy matters, both tools require a verification layer. Claude is the lower-risk choice for high-stakes factual tasks.
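A verification layer does not have to be elaborate. For document-analysis tasks, one simple check confirms that every passage the model claims to quote actually appears in the source. The function below is a hypothetical illustration. It only handles straight double quotes and exact wording after collapsing whitespace, so paraphrased claims still need human or retrieval-based review.

```python
import re

# Matches text inside straight double quotes, e.g. "like this".
QUOTE_PATTERN = re.compile(r'"([^"]+)"')


def _normalize(text: str) -> str:
    """Collapse runs of whitespace so line breaks don't cause false mismatches."""
    return " ".join(text.split())


def unsupported_quotes(answer: str, source: str) -> list[str]:
    """Return quoted passages in `answer` that do not appear verbatim in `source`."""
    normalized_source = _normalize(source)
    return [q for q in QUOTE_PATTERN.findall(answer) if _normalize(q) not in normalized_source]


if __name__ == "__main__":
    source = "The lessee shall pay rent on the first day of each month."
    answer = 'The lease says "pay rent on the first day" and "late fees are waived".'
    print(unsupported_quotes(answer, source))  # ['late fees are waived']
```

Any non-empty result is a signal to flag the answer for review rather than pass it downstream.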

Stability and Uptime

Anthropic has invested significantly in infrastructure reliability. Claude has enterprise SLA options and a track record of uptime suitable for production deployments. DeepSeek’s infrastructure is newer, and while the API has been stable for most users, it does not yet have the same enterprise-grade reliability guarantees.

Known Limitations to Watch

DeepSeek: Data privacy is a real concern for US companies working with sensitive customer data, proprietary information, or regulated industries. Queries sent to DeepSeek’s API are processed on Chinese infrastructure. Verify your compliance requirements before deploying.

Claude: Occasional over-refusals can interrupt workflows, particularly for edge-case prompts that touch on sensitive topics. Teams that need maximum flexibility may find Claude’s guardrails frustrating in certain scenarios.

INTEGRATIONS AND ECOSYSTEM

API Access

Both models offer well-documented APIs with standard REST interfaces. DeepSeek’s API is compatible with OpenAI’s API format in many cases, making migration or parallel testing straightforward for developers.
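The practical difference shows up in the request shape. The sketch below builds one request for each provider using only Python’s standard library, but does not send them. The endpoints, headers, and the `deepseek-chat` model name follow the providers’ public API documentation at the time of writing. The Claude model ID is left as a parameter because model names change, and the helper function names are hypothetical. Because DeepSeek’s format mirrors OpenAI’s chat completions API, DeepSeek’s documentation also shows the official OpenAI SDK pointed at `https://api.deepseek.com` through its `base_url` setting.

```python
import json
import urllib.request

DEEPSEEK_URL = "https://api.deepseek.com/chat/completions"
ANTHROPIC_URL = "https://api.anthropic.com/v1/messages"


def build_deepseek_request(api_key: str, prompt: str, model: str = "deepseek-chat") -> urllib.request.Request:
    """OpenAI-style chat completion request with Bearer auth."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        DEEPSEEK_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def build_claude_request(api_key: str, prompt: str, model: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Anthropic Messages API request: x-api-key auth, version header, max_tokens required."""
    body = {"model": model, "max_tokens": max_tokens, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        ANTHROPIC_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


# To actually send a request (needs a valid key and network access):
#     with urllib.request.urlopen(build_deepseek_request(key, "Hello")) as resp:
#         print(json.load(resp))
```

Swapping providers in an OpenAI-style codebase is mostly a matter of changing the base URL, key, and model name. Moving to Claude’s native API also means changing the auth header and supplying `max_tokens`.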

Developer Ecosystem

Claude benefits from Anthropic’s relationships with major platforms. It is available natively on cloud platforms such as Amazon Bedrock and Google Cloud Vertex AI, and is accessible through a range of SDKs with strong documentation and active community support.

DeepSeek has a growing developer community, particularly in open-source circles. The self-hosted R1 model has attracted significant experimentation from developers who want control over their AI stack.

Third-Party Tools

Claude has native or official integrations with productivity platforms including Notion, Slack, and Zapier. These integrations make it easier to embed Claude into existing business workflows without custom development.

DeepSeek’s third-party integrations are more limited at the consumer level, though its API compatibility makes it usable within any system that supports OpenAI-style API calls.

Workflow Compatibility

For teams whose existing tools already ship with built-in Anthropic support, Claude is easier to plug in. Tools built around OpenAI’s API can often be pointed at DeepSeek instead, thanks to its OpenAI-compatible format. For teams building their own infrastructure and willing to do integration work, DeepSeek is a viable and cost-efficient foundation.

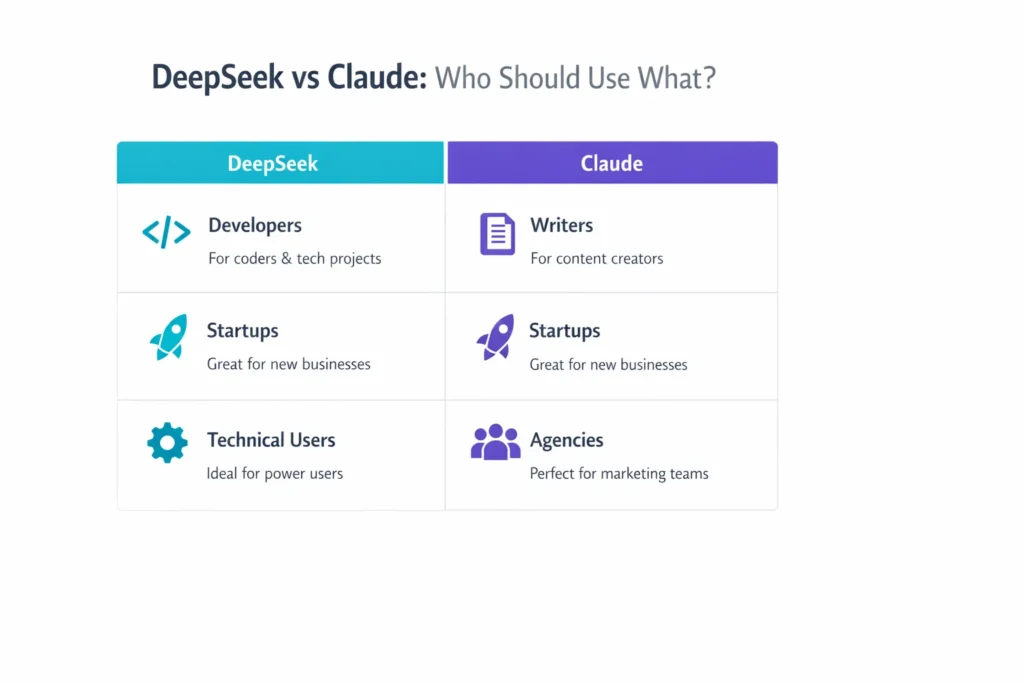

USE CASE BREAKDOWN: WHICH TOOL WINS FOR YOUR ROLE?

Developers and Coders

DeepSeek is the stronger choice. Its coding benchmarks are competitive with GPT-4 class models, and the API cost makes it practical for development tools, code review automation, and AI-assisted IDEs at scale. Claude is capable in code but does not have the same cost advantage for development-heavy workflows.

Content Creators and Writers

Claude wins without much debate. The output quality, tone consistency, and instruction-following make it the preferred tool for anyone producing written content professionally. Blog posts, ad copy, email sequences, social content — Claude produces output that requires less editing and better reflects the intended voice.

Startups and Bootstrapped Founders

The answer depends on what you are building. If your product is technical — a dev tool, a data product, an API-driven application — DeepSeek gives you production-level AI at startup-friendly costs. If your product is customer-facing and requires polished communication, Claude is worth the investment.

Agencies

Most agencies should default to Claude for client deliverables. Output quality directly affects client perception, and Claude’s reliability reduces the risk of embarrassing outputs. DeepSeek can be used for internal workflows, research, and cost reduction on tasks that do not touch clients directly.

Enterprise Workflows

Claude is the enterprise-ready choice. US data residency, strong compliance positioning, SLA options, and Constitutional AI safety design make it appropriate for regulated industries, enterprise sales environments, and any deployment where a harmful output carries legal or reputational risk.

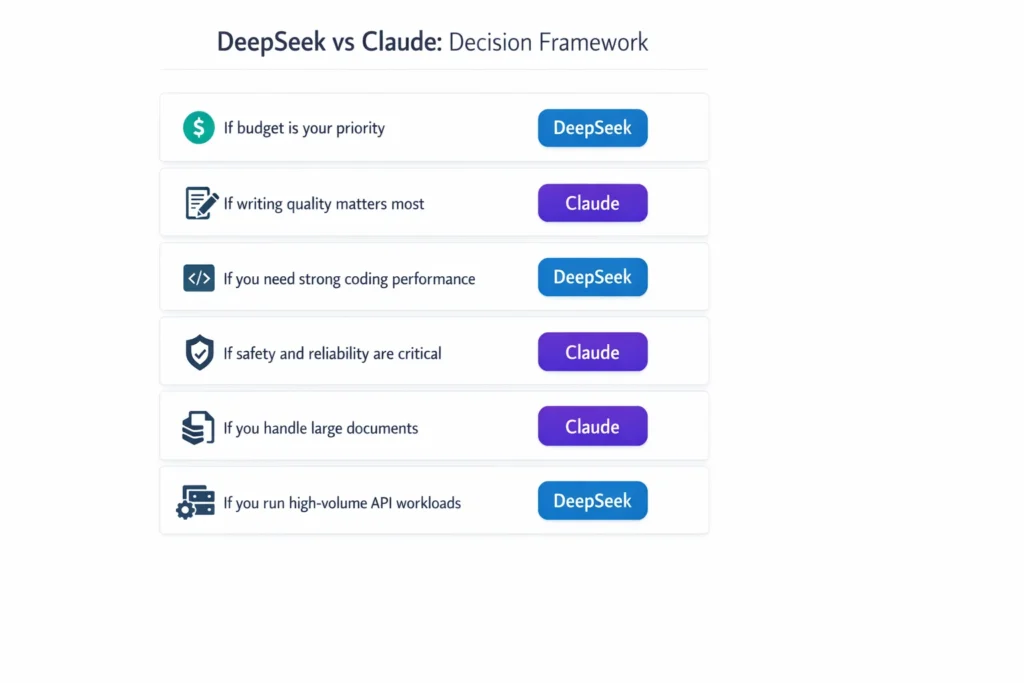

DECISION FRAMEWORK: HOW TO CHOOSE BETWEEN DEEPSEEK AND CLAUDE

If budget is the top priority, choose DeepSeek. The API pricing difference is substantial, and for technical use cases the performance trade-off is minimal.

If writing quality and tone consistency matter, choose Claude. The gap in creative and editorial output is real and meaningful for any workflow where language quality drives results.

If coding is your primary use case, choose DeepSeek. It is competitive with Claude on most coding benchmarks, ahead on some, and costs a fraction as much.

If safety, compliance, or US data residency are requirements, choose Claude. There is no ambiguity here — for regulated industries and enterprise compliance, Claude is the appropriate choice.

If you are scaling with heavy API usage, run a cost-per-output analysis. DeepSeek almost always wins on volume economics for technical tasks. Claude may still be justified for output-quality-sensitive tasks even at scale.
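A cost-per-output analysis can go one step beyond token prices by counting retries and human editing time. The sketch below assumes that rejected outputs are simply regenerated. Under that assumption, the expected number of calls per usable output is 1 divided by the acceptance rate. The `Trial` fields are hypothetical. The numbers you feed it should be your own measurements, not figures from this article.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """Measured results for one model on one task type (all values are your own)."""
    name: str
    cost_per_call: float     # USD per API call, e.g. from a token-cost estimate
    acceptance_rate: float   # share of outputs usable after editing, in (0, 1]
    edit_minutes: float      # average human editing time per accepted output
    hourly_rate: float       # USD per hour of editor or reviewer time

    def cost_per_accepted_output(self) -> float:
        if not 0 < self.acceptance_rate <= 1:
            raise ValueError("acceptance_rate must be in (0, 1]")
        # Expected calls per accepted output when rejects are regenerated.
        model_cost = self.cost_per_call / self.acceptance_rate
        labor_cost = self.edit_minutes * self.hourly_rate / 60
        return model_cost + labor_cost


if __name__ == "__main__":
    # Placeholder inputs for illustration only; replace with measured values.
    cheap = Trial("model A", cost_per_call=0.001, acceptance_rate=0.5, edit_minutes=10, hourly_rate=60)
    premium = Trial("model B", cost_per_call=0.02, acceptance_rate=0.9, edit_minutes=3, hourly_rate=60)
    for t in (cheap, premium):
        print(t.name, round(t.cost_per_accepted_output(), 3))
```

With inputs like these placeholders, editing time dominates the API bill, which is why quality-sensitive tasks can justify a pricier model. In fully automated technical pipelines with no human review, the token-cost term dominates instead.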

If ease of use for a non-technical team is the priority, choose Claude. The interface, the instruction-following, and the output quality make it more accessible for teams without dedicated AI expertise.

PROS AND CONS SUMMARY

DeepSeek Pros

- API pricing is among the lowest available for a frontier-quality model

- Open-source R1, V2, and V3 variants allow self-hosting and fine-tuning

- Strong coding and mathematical reasoning performance

- OpenAI API compatibility simplifies integration for technical teams

DeepSeek Cons

- Data processed on Chinese infrastructure raises compliance concerns for US businesses

- Weaker performance on creative writing, editorial tasks, and nuanced long-form content

- Less polished consumer product and smaller support ecosystem

- Higher variability in output quality on open-ended prompts

Claude Pros

- Best-in-class writing quality and instruction-following among current LLMs

- 200K token context window handles the most document-intensive workflows

- Lower hallucination risk on factual and high-stakes tasks

- US-based infrastructure with enterprise-grade reliability and compliance options

- Wide third-party integrations and strong developer ecosystem

Claude Cons

- API pricing is significantly higher, particularly at scale

- Occasional over-refusals on edge-case prompts disrupt some workflows

- Fully proprietary — no self-hosting or open-source version available

- Can feel slower on simple or low-complexity tasks

FINAL VERDICT: DEEPSEEK VS CLAUDE

There is no universally correct answer here, and any article that tells you otherwise is oversimplifying.

DeepSeek is a legitimate, powerful tool — not a cheap knockoff. For developers, technical teams, and cost-conscious builders, it offers real value that is hard to dismiss. The fact that it is open-source and API-compatible with OpenAI standards makes it genuinely useful for production deployments.

Claude is the more complete product for the broadest range of business users. The writing quality, reliability, safety design, and ecosystem support make it the lower-risk, higher-quality choice for teams where AI output represents the business.

- Best for developers: DeepSeek

- Best for content and writing: Claude

- Best for budget-conscious teams: DeepSeek

- Best for enterprise and compliance: Claude

- Best overall for most business users: Claude, with DeepSeek as a cost-optimized complement for specific technical tasks

If you are unsure, start with Claude’s free tier for your writing and client-facing tasks. Test DeepSeek’s API on your development and back-end workflows. Let your actual use case — not benchmarks or hype — make the decision for you.

FAQS: DEEPSEEK VS CLAUDE

Is DeepSeek better than Claude?

It depends on the task. DeepSeek outperforms Claude on coding benchmarks and is significantly cheaper. Claude outperforms DeepSeek on writing quality, long-context handling, and reliability for business-critical tasks. Neither model is universally better — your use case determines the winner.

Can DeepSeek replace Claude?

For technical and developer use cases, DeepSeek is a credible replacement and in many scenarios the smarter choice. For writing, editorial, content creation, and enterprise-grade work, Claude remains the stronger option and is not easily replaced by DeepSeek’s current capabilities.

Which is cheaper, DeepSeek or Claude?

DeepSeek is substantially cheaper. Its API pricing is roughly one-tenth to one-fiftieth of Claude’s, depending on the model tier and the balance of input and output tokens. DeepSeek also offers open-source models at no licensing cost. Claude’s free tier is generous for personal use, but its API costs are significantly higher at scale.

Which is better for coding, DeepSeek or Claude?

DeepSeek is the better choice for coding. It is competitive with Claude on standard coding benchmarks like HumanEval, ahead on some, and far more cost-efficient for development-heavy API use. Claude is capable in code but does not hold the same cost advantage in this category.

Which is better for content writing, DeepSeek or Claude?

Claude is better for content writing. It consistently produces more polished, nuanced, and on-brand output for marketing, editorial, and communications use cases. The gap in writing quality is meaningful and noticeable in real-world use.

CONCLUSION

DeepSeek and Claude are both serious tools. The choice between them is not about which one is smarter — it is about which one fits your work.

If your core need is technical, cost-sensitive, or developer-focused, DeepSeek deserves a genuine evaluation. The benchmarks are real, the pricing advantage is real, and the open-source flexibility is genuinely useful.

If your core need is writing quality, business reliability, long-context understanding, or enterprise-grade safety, Claude is the better investment. The higher cost reflects a meaningfully better product for those specific outcomes.

The most practical approach for many teams is not an either-or decision. Use DeepSeek where cost and coding matter. Use Claude where quality and trust matter. Test both on your actual workflows before committing, and let results — not reputation — guide your final choice.